You’ve probably had that moment: you take a photo, stare at it a little too long, and wonder why one angle feels “right” while another feels strangely unfamiliar. The curiosity isn’t vanity so much as a desire for clarity—a calmer explanation of what you’re seeing. That’s the mindset I had when I tried AI Face Rater: not to chase a perfect score, but to understand how facial symmetry, spacing, and proportions can be measured consistently—without the mood swings of human feedback.

What surprised me most wasn’t the number. It was how the tool framed the result as something you can learn from—a structured readout you can compare over time.

Why “Face Rating” Tools Feel So Personal (Even When They’re Just Math)

A face score lands differently than a step count or a sleep grade. It touches identity. That’s why the best way to approach an AI rater is to treat it like a ruler, not a judge.

A ruler can still be useful:

- It makes comparisons easier (today vs. last month).

- It turns vague impressions (“this photo looks off”) into testable variables (lighting, angle, expression).

- It gives you a repeatable baseline.

But it also has limits:

- A ruler can’t measure style, charisma, or the story your face tells in motion.

- It can’t capture cultural context or personal preference.

- It can’t tell you what should be beautiful—only what aligns with certain measurable patterns.

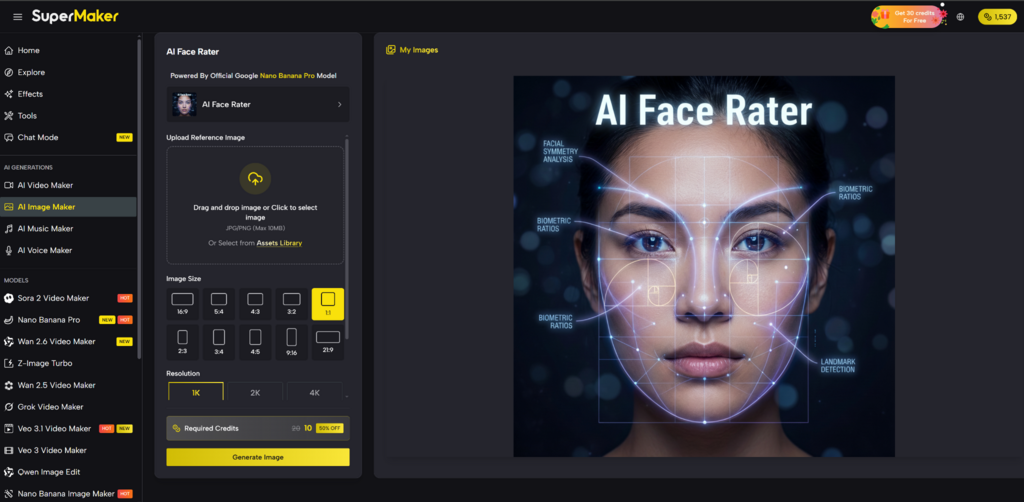

How SuperMaker’s AI Face Rater Works (In Plain English)

At a high level, the workflow looks like this:

- You upload a clear selfie (JPG/PNG, up to 10MB).

- The system detects facial landmarks (key points around eyes, nose, lips, jawline).

- It runs geometric measurements and proportion checks (including symmetry and ratio alignment).

- It generates:

  - An overall score

  - A descriptive explanation of why that score was assigned

  - A report-like breakdown you can review and save
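To make the "geometric measurements" step less abstract, here is a minimal sketch of one way a symmetry check could be computed from landmark coordinates. This is my own illustration, not SuperMaker's actual method: the function name `symmetry_error`, the landmark pairs, and the choice to normalize by the distance between the first pair are all assumptions.

```python
import math

def symmetry_error(pairs, midline_x):
    """Mean distance between each right-side landmark and the mirror image
    of its left-side partner, divided by the separation of the first pair.
    0.0 means the pairs are perfectly mirrored across the vertical midline."""
    (lx, ly), (rx, ry) = pairs[0]
    scale = math.hypot(rx - lx, ry - ly)  # e.g. eye-to-eye distance
    total = 0.0
    for (lx, ly), (rx, ry) in pairs:
        mirrored_x = 2 * midline_x - lx  # reflect the left point across the midline
        total += math.hypot(mirrored_x - rx, ly - ry)
    return total / (len(pairs) * scale)

# Hypothetical (left, right) landmark pairs in pixel coordinates:
# first pair = eye corners, second pair = mouth corners.
mirrored_face = [((40, 50), (60, 50)), ((42, 80), (58, 80))]
uneven_face = [((40, 50), (60, 50)), ((42, 80), (61, 82))]

print(symmetry_error(mirrored_face, midline_x=50))
print(symmetry_error(uneven_face, midline_x=50))
```

Dividing by a reference distance keeps the number comparable between a close-up and a photo taken from farther away, which matters if you plan to compare results across different selfies.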

SuperMaker presents this as an image-to-image style process, and the page notes it’s powered by an official “Google Nano Banana Pro” model. In practice, what you feel as a user is simple: upload → wait briefly → read something that looks like a structured evaluation rather than a random “hot or not” verdict.

My Quick Test: What Changed the Score More Than I Expected

I tested with two photos taken the same day:

- Photo A: front-facing, even lighting, neutral expression

- Photo B: slightly angled, overhead light, faint smile

The difference wasn’t dramatic—but it was consistent with what you’d predict from landmark-based systems:

- Overhead lighting changed shadow shape near the eyes and cheekbones.

- A slight head tilt shifted perceived symmetry.

- A smile subtly changed distances around the mouth and cheeks, which can affect ratio calculations.
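The head-angle effect is easy to reproduce with a toy model. The sketch below assumes three made-up 3D points (two eyes and a nose tip that sits in front of them) and a simple orthographic projection. None of this comes from the tool itself; it only shows why a small turn of the head can change a left/right measurement even though the face is unchanged.

```python
import math

def projected_x(point, yaw_degrees):
    """Horizontal image position of a 3D point after turning the head
    by yaw_degrees around the vertical axis (orthographic projection)."""
    x, _, z = point
    t = math.radians(yaw_degrees)
    return x * math.cos(t) + z * math.sin(t)

def left_right_ratio(yaw_degrees):
    """Ratio of the nose-to-left-eye and nose-to-right-eye horizontal
    distances. 1.0 means the two sides measure the same in the image."""
    # Hypothetical coordinates in millimetres: x = sideways, y = down, z = toward camera.
    left_eye = (-30.0, 0.0, 0.0)
    right_eye = (30.0, 0.0, 0.0)
    nose_tip = (0.0, 30.0, 25.0)
    nose = projected_x(nose_tip, yaw_degrees)
    left = nose - projected_x(left_eye, yaw_degrees)
    right = projected_x(right_eye, yaw_degrees) - nose
    return left / right

for yaw in (0, 5, 10):
    print(yaw, round(left_right_ratio(yaw), 3))
```

With a front-facing pose the ratio is 1.0. As the yaw grows, the nose tip shifts sideways in the image faster than the eyes do, so the two sides stop matching.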

My takeaway: the tool didn’t feel like it was “judging me.” It felt like it was judging the photo conditions—and that’s exactly how you should use it if you want a fair baseline.

What Makes This Different From “Random Score” Face Apps

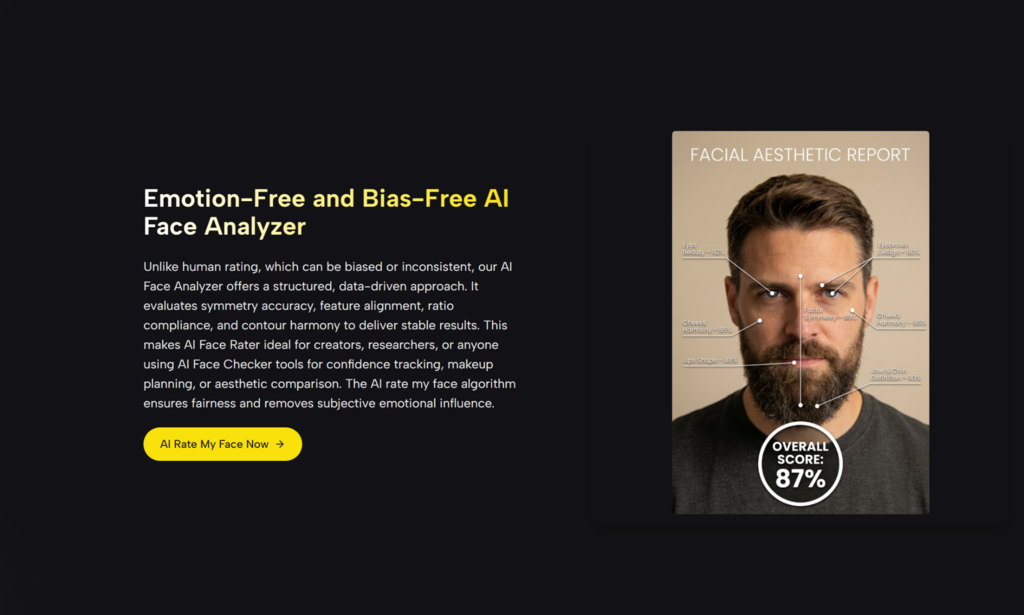

A lot of face rating experiences feel like entertainment first and analysis second. SuperMaker’s approach leans more “explainer.”

Here’s a practical comparison:

| Comparison Item | SuperMaker AI Face Rater | Typical Face Rating Apps | Human Opinions (Friends/Comments) |
| --- | --- | --- | --- |
| Consistency | Repeatable scoring based on landmark geometry | Often inconsistent or opaque | Highly variable (mood, bias, context) |
| Explanation | Descriptive breakdown of features and proportions | Usually minimal or generic | Often vague (“you look good here”) |
| Useful for Tracking | Strong: compare scores across time/photos | Mixed: entertainment-driven | Weak: memory-based, inconsistent |
| Controls | Lets you choose image size, resolution, number of images, and visibility options | Usually limited controls | Not applicable |
| Privacy Model | Claims photos are processed securely and deleted after analysis | Depends, often unclear | Depends on where you share |

Where the “Science” Is Helpful—and Where It Can Mislead

It’s true that facial symmetry and proportionality have been studied for a long time in aesthetics, art, and perception research. Modern AI systems often formalize this with landmark detection and deep-learning models trained on large datasets.

Still, it’s important to hold two ideas at once:

- Measurement can be real: symmetry, spacing, and ratios can be computed reliably.

- Meaning can be fragile: turning those measurements into “attractiveness” is influenced by culture, dataset design, and subjective labeling.

If you want a neutral, non-marketing perspective on the broader field, there are open-access academic papers discussing CNN-based attractiveness scoring and how factors like facial expression can confound results. Reading one of those alongside any tool keeps your expectations grounded.

How to Get More Accurate Results (Without Overthinking It)

The tool itself suggests what most landmark systems need: clarity and consistency.

Best practices that worked for me

- Even, soft lighting (window light beats overhead light)

- Front-facing angle for baseline tests

- Minimal filters (filters can distort geometry and texture cues)

- Neutral expression if you want the most stable comparison

A simple tracking routine

- Take one baseline selfie (same spot, same time of day).

- Test variations (smile vs neutral, left vs right angle).

- Save the report and compare patterns, not just scores.
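If you keep your results in a small log, "compare patterns, not just scores" can be as simple as averaging by condition. The sketch below is one possible way to do that. The field names and the scores are made up for illustration, and the tool does not export data in this format as far as I know.

```python
from collections import defaultdict
from statistics import mean

def mean_score_by(records, key):
    """Average the 'score' field for each distinct value of `key`."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key]].append(record["score"])
    return {value: mean(scores) for value, scores in groups.items()}

# Made-up log entries: one per test photo, typed in by hand.
log = [
    {"lighting": "window", "expression": "neutral", "score": 7.4},
    {"lighting": "window", "expression": "smile", "score": 7.0},
    {"lighting": "overhead", "expression": "neutral", "score": 6.6},
    {"lighting": "overhead", "expression": "smile", "score": 6.4},
]

print(mean_score_by(log, "lighting"))
print(mean_score_by(log, "expression"))
```

Grouping by one variable at a time shows which condition moves the number most, which is more useful than staring at any single score.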

Limitations You Should Expect (So the Tool Feels More Trustworthy)

No good AI tool is “effortless magic,” and face raters are no exception.

1. Results can vary with photo quality

If your image is blurry, heavily filtered, or badly lit, the landmark detection step can become less stable—so the score may shift for reasons unrelated to your face.

2. Expression and pose can change geometry

A smile, raised eyebrows, or a slight head rotation can alter measured distances. That doesn’t mean you changed—just the geometry the model sees.

3. One score can’t represent your real-world presence

People aren’t experienced as still images. Movement, voice, style, and confidence don’t fit neatly into a ratio table.

4. Dataset bias is a real concern in this category

Any system trained on human-labeled “attractiveness” can inherit cultural preferences or demographic skews. The best mindset is to treat results as one lens, not a final verdict.

How I’d Use AI Face Rater in Real Life

Not for obsession. Not for validation. For feedback loops.

Practical uses

- Testing which lighting setup makes photos look more balanced

- Comparing makeup/hairstyle choices in a measurable way

- Tracking changes during fitness or skincare routines

- Content creation: “photo experiments” with a consistent scoring method

And honestly, the most valuable outcome I got was psychological: the score mattered less than the realization that tiny photo variables can change perception dramatically. That made me less harsh on myself and more curious about what the camera is actually doing.

A Gentle Way to Interpret Your Results

If your score is higher than expected

Enjoy it—but don’t assume the number is your identity. It may reflect a great photo setup.

If your score is lower than expected

Before you doubt yourself, retest with:

- better lighting

- front-facing angle

- neutral expression

- no filters

Often, the “improvement” comes from controlling conditions, not changing your face.

The Real Promise: Not “Beauty,” but Clarity

Used thoughtfully, SuperMaker’s AI Face Rater feels less like a ranking machine and more like a structured feedback tool: landmark mapping, geometric measurement, ratio-based evaluation, and a readable breakdown you can actually learn from.

If you treat it as a mirror with a measuring tape—rather than a judge with a gavel—you’ll get the best version of what it can offer: calm, repeatable insight into how a selfie is being interpreted, and what you can change if your goal is better photos, not a perfect face.