The primary challenge in contemporary digital communication is the inherently static nature of traditional visual assets, which often fail to capture the fluid dynamics of a brand’s narrative. While a high-resolution photograph can freeze a moment in time, it frequently leaves the audience disengaged in a fast-paced media environment where motion is the primary driver of attention. This friction between frozen imagery and the need for cinematic movement creates a significant hurdle for creators who lack the resources for full-scale video production. By integrating Image to Video AI into the professional toolkit, storytellers can bridge this gap, transforming inert graphics into atmospheric sequences that retain their original visual integrity while gaining a new dimension of temporal engagement.

This shift toward generative motion is not merely a stylistic choice but a response to the evolving psychology of digital consumption. In my observation, the human eye is naturally programmed to prioritize movement over static objects, a trait that modern marketing strategies must leverage to remain competitive. The complexity of synthesizing realistic motion—ensuring that shadows, textures, and perspectives remain consistent—has long been the domain of high-end visual effects studios. However, the emergence of sophisticated generative models has democratized this capability, allowing for a level of creative experimentation that was previously cost-prohibitive.

The Synergy Of Leading Generative Engines For Realistic Motion Synthesis

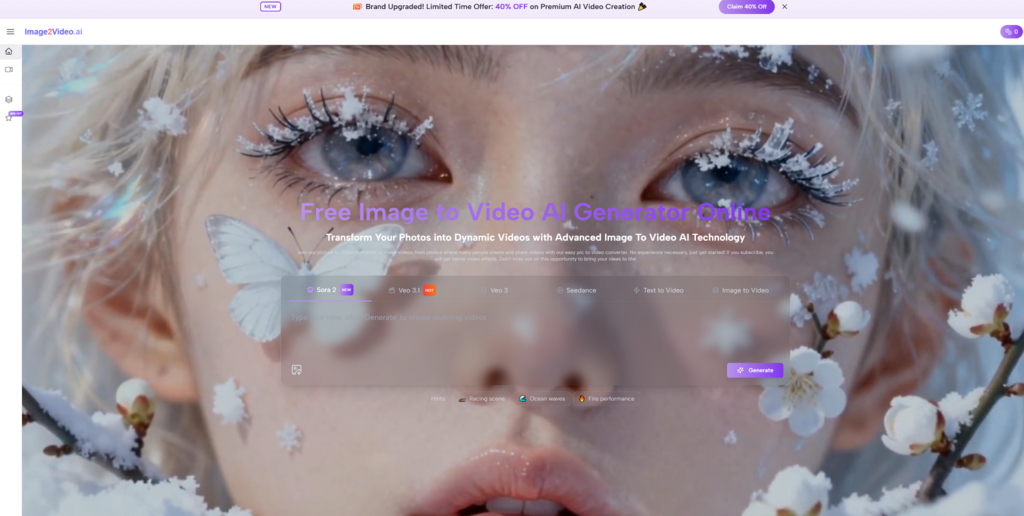

The current landscape of image-based animation is defined by the convergence of several high-performance models, each contributing a specific strength to the final output. Platforms that succeed in this space do so by aggregating top-tier engines like Sora 2, Veo 3.1, and Seedance 2.0. In my testing, the integration of these models allows the system to interpret the 3D volume of a 2D image with remarkable precision. Sora 2, for instance, is noted for its ability to maintain character consistency, while Veo 3.1 excels in rendering environmental lighting. This multi-model approach ensures that when a user initiates an Image to Video conversion, the result is not just a simple pan or zoom, but a physically plausible simulation of reality.

Technical consistency is the benchmark of professional-grade generative tools. One of the most common issues in AI-generated content is “morphing,” where objects lose their shape during movement. By leveraging the latest updates in model architecture, the system minimizes these artifacts, providing a stable foundation for marketing and social media assets. My observation is that the latest iterations of Seedance 2.0 have significantly improved the way the AI handles complex textures like water, smoke, and human skin, making the transition from a photo to a five-second cinematic clip feel seamless and intentional.

Evaluating The Technical Performance Of High Fidelity Video Generation Systems

When analyzing the performance of these tools, it is crucial to look beyond the surface aesthetics and evaluate the underlying stability of the generated frames. Professional creators require a system that understands the relationship between foreground and background elements. For example, if a camera pan is requested, the AI must correctly calculate the parallax effect—where objects closer to the camera move faster than those in the distance. In my testing, the high-fidelity outputs from current leading models manage this spatial logic with a high degree of accuracy, which is essential for maintaining the “suspension of disbelief” required for cinematic storytelling.
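The parallax relationship described above can be sketched with a simple pinhole-camera approximation. This is only an illustration of the geometry, not how any of the named models compute motion internally. The function name `parallax_shift_px` and the sample focal length, camera shift, and depths are assumptions chosen for the example, and the camera move is assumed to be a sideways translation.

```python
def parallax_shift_px(focal_length_px: float, camera_shift_m: float, depth_m: float) -> float:
    """Apparent horizontal shift, in pixels, of a point at depth_m
    when the camera translates sideways by camera_shift_m.

    Pinhole approximation: shift = focal_length * camera_shift / depth.
    """
    if depth_m <= 0:
        raise ValueError("depth_m must be positive")
    return focal_length_px * camera_shift_m / depth_m


# Hypothetical scene: a subject 2 m away and a background wall 20 m away,
# filmed with a 1000 px focal length while the camera slides 0.5 m sideways.
near_shift = parallax_shift_px(1000.0, 0.5, 2.0)
far_shift = parallax_shift_px(1000.0, 0.5, 20.0)

print(f"foreground shift: {near_shift:.1f} px")
print(f"background shift: {far_shift:.1f} px")
```

Because the shift is inversely proportional to depth, the foreground subject moves ten times as far across the frame as the background. A generated clip that fails to preserve this ratio tends to look flat or "sliding".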

However, the effectiveness of the output is heavily influenced by the quality of the initial input and the specificity of the descriptive prompt. While the AI is capable of extraordinary feats, it remains a collaborative tool that thrives on clear direction. A vague prompt may result in unpredictable motion, whereas a detailed description of lighting, speed, and direction allows the engine to align its generative capabilities with the creator’s vision. This collaborative dynamic is the future of digital content creation, where the role of the creator shifts from manual labor to high-level direction and curation.
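One way to keep prompts specific is to assemble them from named parts, so that speed, camera movement, and lighting are never left out by accident. The sketch below is a hypothetical helper for organizing prompt text. `MotionPrompt` and its fields are illustrative names, not part of any platform's API.

```python
from dataclasses import dataclass, field


@dataclass
class MotionPrompt:
    """Hypothetical container for the parts of a motion prompt."""
    speed: str
    camera_move: str
    subject: str
    lighting: str = ""
    details: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Lead with speed and camera movement, then append optional descriptors.
        parts = [f"{self.speed} {self.camera_move} on {self.subject}"]
        if self.lighting:
            parts.append(self.lighting)
        parts.extend(self.details)
        text = ", ".join(p.strip() for p in parts if p.strip())
        return text[:1].upper() + text[1:] + "."


prompt = MotionPrompt(
    speed="slow",
    camera_move="cinematic zoom",
    subject="the subject",
    lighting="realistic lens flare",
    details=["soft background blur"],
).render()

print(prompt)
```

Keeping the parts separate also makes it easy to vary one element at a time, such as the speed, and compare the resulting clips.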

Practical Execution Steps For Transforming Static Graphics Into Cinematic Clips

The process of generating high-quality motion is designed to be streamlined, focusing on user intent rather than technical complexity. Following these official steps ensures the most consistent results across various image types.

- Step 1: Resource Initialization. Upload your high-quality JPEG or PNG file to the processing interface. The AI uses this as the “anchor” for all subsequent frame generation.

- Step 2: Natural Language Direction. Provide a prompt that describes the intended motion. For example, “Slow cinematic zoom on the subject with realistic lens flare and soft background blur.”

- Step 3: Multi-Model Processing. The system engages its generative engines to calculate frame-by-frame movement. This usually takes about 5 minutes, depending on the complexity of the requested motion.

- Step 4: Review and Export. Once the status displays “Completed,” preview the 5-second MP4 video and download it for use in your chosen digital channel.

Comparative Analysis Of Generative Motion Capabilities And Output Quality

To understand the value of these advanced tools, it is helpful to compare their technical output against traditional animation methods.

| Evaluation Category | Traditional Static Processing | Generative AI Synthesis |
| --- | --- | --- |
| Motion Depth | 2D Manipulation Only | Full 3D Physics and Parallax |
| Texture Stability | Static / No Change | Dynamic Interaction with Light |
| Creation Speed | Manual Keyframing (Hours) | Automated Generation (Minutes) |
| Format Flexibility | Fixed Dimensions | Universal MP4 Output |

In my observation, the ability to control camera movement—such as pan, zoom, tilt, and rotation—is what truly elevates the Photo to Video process. These controls allow a creator to direct the audience’s gaze, much like a cinematographer on a film set. However, users should be aware of current limitations: the maximum video length is five seconds, and background audio must be added via external software, as direct audio file integration is not currently supported by the primary generative interface.
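Since audio has to be added outside the generative interface, one common option is the FFmpeg command-line tool. The sketch below only builds the command and assumes FFmpeg is installed separately. The file names are placeholders, and `build_mux_command` is a hypothetical helper name.

```python
import shlex


def build_mux_command(video_path: str, audio_path: str, output_path: str) -> list[str]:
    """Build an FFmpeg command that adds an audio track to a silent clip."""
    return [
        "ffmpeg",
        "-i", video_path,   # input 0: the generated MP4
        "-i", audio_path,   # input 1: the background audio
        "-map", "0:v:0",    # take the video stream from input 0
        "-map", "1:a:0",    # take the audio stream from input 1
        "-c:v", "copy",     # copy the video stream without re-encoding
        "-c:a", "aac",      # encode the audio as AAC for MP4 playback
        "-shortest",        # stop when the shorter input (the clip) ends
        output_path,
    ]


cmd = build_mux_command("clip.mp4", "music.mp3", "clip_with_audio.mp4")
print(shlex.join(cmd))
```

The printed command can be pasted into a terminal, or the list can be passed to `subprocess.run(cmd, check=True)`. Copying the video stream leaves the generated frames untouched, and `-shortest` trims a longer music track to the five-second clip.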

Future Trends In Generative Video And Professional Content Strategy

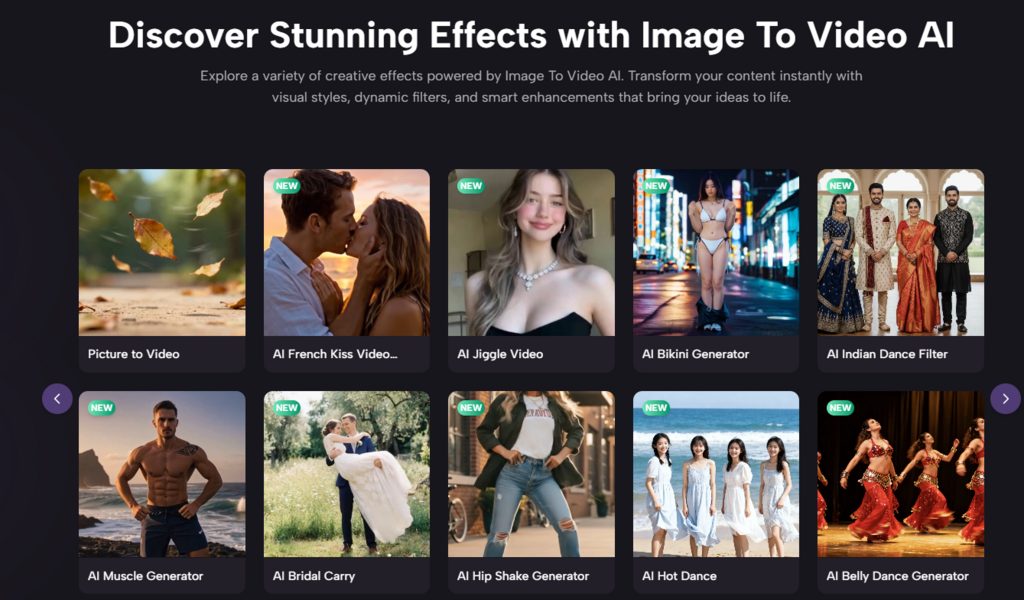

As generative technology continues to advance, the boundary between professional cinematography and AI-assisted creation will become increasingly blurred. The industry is moving toward a future where “editing as easily as photos” is no longer a marketing slogan but a functional reality. By mastering these tools today, creators can stay ahead of the curve, producing high-volume, high-quality content that meets the demands of modern digital platforms. The potential for rapid iteration means that a content strategy can evolve in real time, testing different motion styles to see which resonates most with the target audience.

Ultimately, the goal of using advanced motion synthesis is to enhance the emotional resonance of a story. Whether it is a product being showcased in 360 degrees or an old family photograph being brought back to life, the movement adds a layer of humanity and presence that a still image simply cannot provide. While the tools are becoming more automated, the soul of the content still resides in the creator’s vision. As we look toward the next iteration of models like Sora and Veo, the possibilities for visual storytelling are virtually limitless, provided we approach them with both technical curiosity and a critical eye for quality.